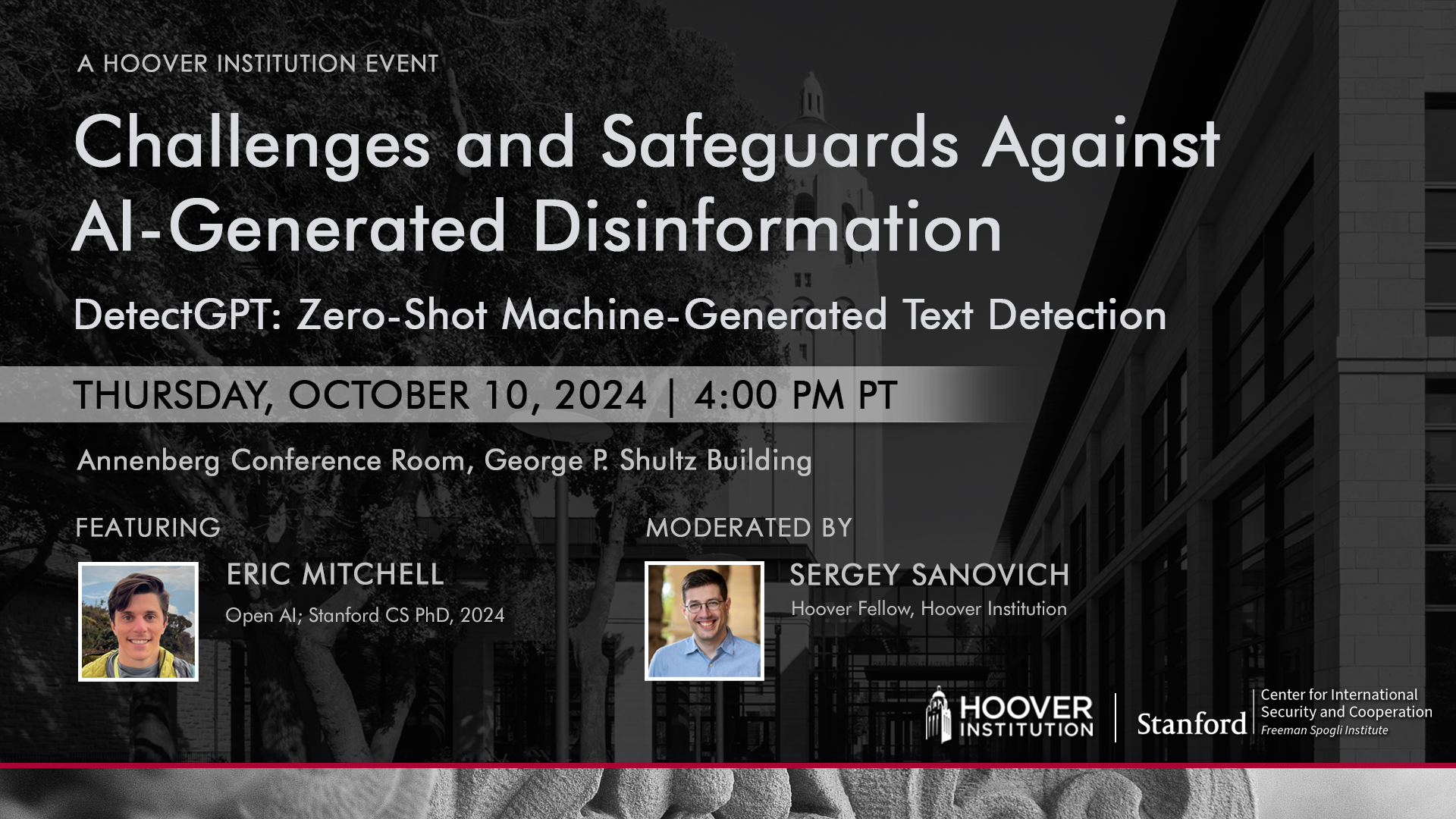

The first session of the series Challenges and Safeguards against AI-Generated Disinformation discusses "DetectGPT: Zero-Shot Machine-Generated Text Detection" with Eric Anthony Mitchell and Sergey Sanovich on Thursday, October 10, 2024, at 4:00 pm in the Annenberg Conference Room, Shultz Building.

Read the paper here.

ABOUT THE SPEAKERS

Eric Mitchell is a researcher at OpenAI. He completed his PhD in Stanford's Computer Science Department, advised by Chelsea Finn and Christopher D. Manning, and defended in June 2024. His research is focused on improving the trustworthiness of foundation models. In particular, he worked on making language models more factual, more up-to-date, and better able to understand user intent. He is also interested in scalable oversight and in improving the reasoning and planning abilities of language models. His graduate studies were supported by a Knight-Hennessy Graduate Fellowship and by a Stanford Accelerator for Learning grant for Generative AI for the Future of Learning. In the summer of 2022, he was a research scientist intern at DeepMind in London, where he spent four months working with Junyoung Chung, Nate Kushman, and Aäron van den Oord. Before his PhD, he was a research engineer at Samsung's AI Center in New York City, where he worked with Volkan Isler and Daniel D. Lee. As an undergraduate, he completed his thesis in the Seung Lab at the Princeton Neuroscience Institute.

Sergey Sanovich is a Hoover Fellow at the Hoover Institution. Before joining the Hoover Institution, he was a postdoctoral research associate at the Center for Information Technology Policy at Princeton University. Sanovich received his PhD in political science from New York University and continues his affiliation with its Center for Social Media and Politics. His research focuses on disinformation and social media platform governance; online censorship and propaganda by authoritarian regimes; and elections and partisanship in information autocracies. His work has been published in the American Political Science Review, Comparative Politics, Research & Politics, and Big Data, and as the lead chapter in an edited volume on disinformation from Oxford University Press. Sanovich has also contributed to several policy reports focused on protection from disinformation, including "Securing American Elections," issued by the Stanford Cyber Policy Center at its launch.

ABOUT THE SERIES

Distinguishing between human- and AI-generated content is already an important problem in multiple domains, from social media moderation to education. As a result, there is a quickly growing body of empirical research on AI detection and an equally quickly growing industry of commercial and noncommercial detection tools. But will current tools survive the next generation of LLMs, including open models and models built specifically to evade detection? What about the generation after that? Through presentations of cutting-edge research and talks by leading industry professionals, this series will clarify the limits of detection in the medium and long term and help identify the optimal points and types of policy intervention. This series is organized by Sergey Sanovich.